Abstract

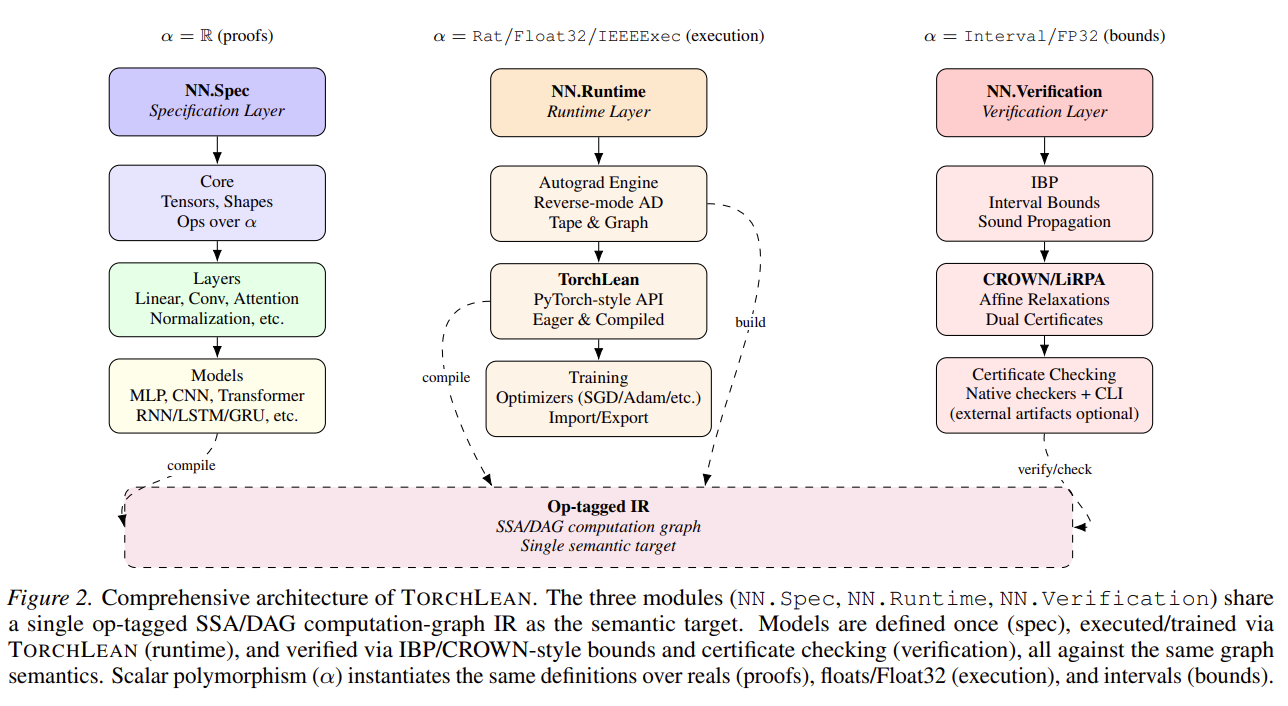

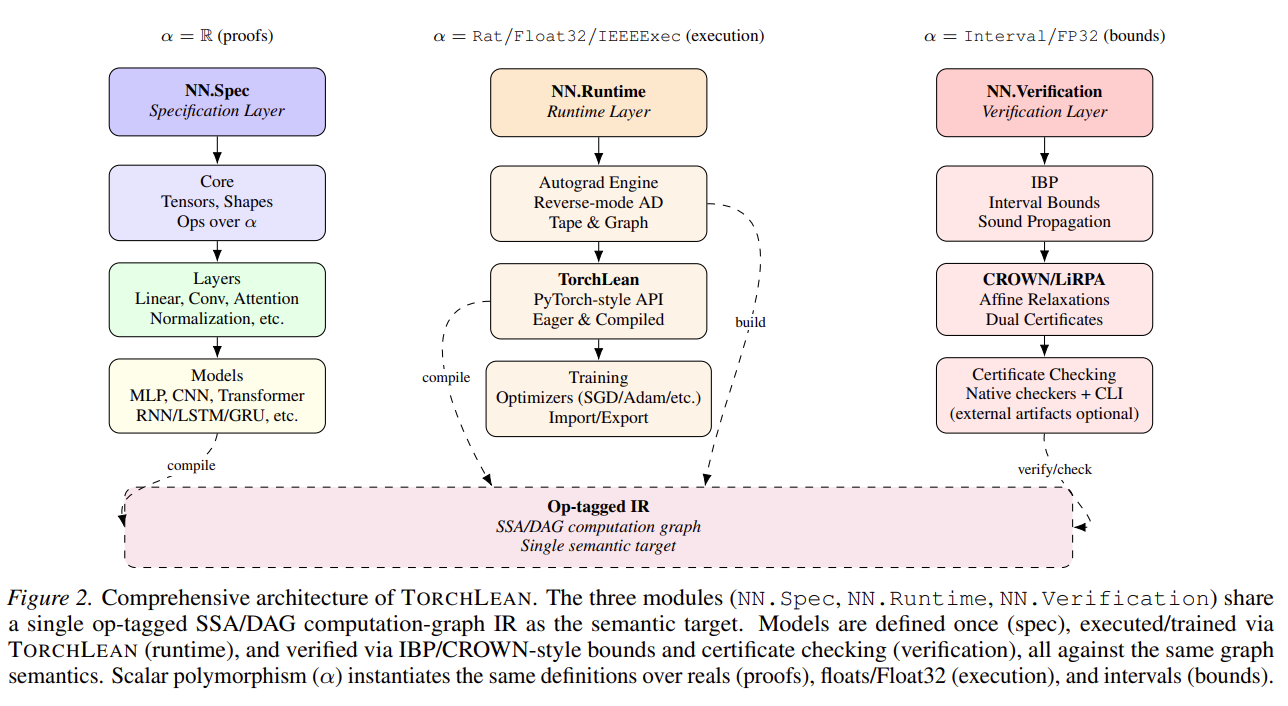

Neural networks are increasingly deployed through software stacks whose

behavior is defined by tensor layouts, preprocessing choices, compiler

boundaries, floating-point arithmetic, GPU kernels, and external model

exporters. TorchLean is a Lean 4 codebase for neural network specification,

execution, and verification that keeps those interfaces explicit. It gives

models a shared formal vocabulary for typed tensors, shapes, layers, graph

programs, objectives, gradients, finite-precision behavior, certificate

checking, and runtime trust boundaries. The current codebase includes a

PyTorch-style API, eager and compiled execution through an op-tagged SSA/DAG

graph IR, reverse-mode autograd, optimizers, CUDA/GPU execution with CPU

stubs and sanitizer/profiling support, PyTorch import/export workflows,

Float32/IEEE-754 semantics, IBP and CROWN/LiRPA-style verification, and a

model zoo covering MLPs, CNNs, ResNets, ViTs, GPT-style text models, GPT-2,

Mamba/SSM examples, diffusion, MAE-style self-supervised learning,

reinforcement learning, PINNs, and 3D-geometry certificate demos.